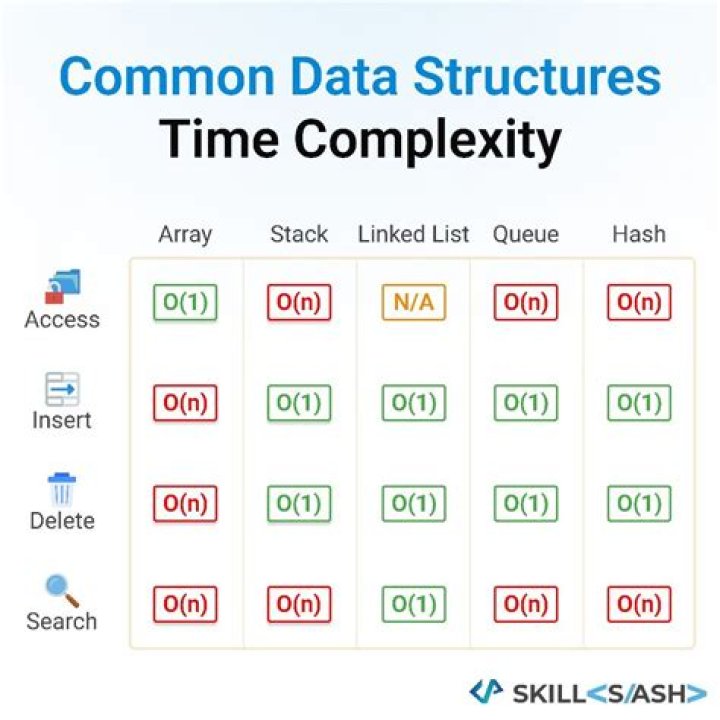

What is the average-case time complexity for looking up values in a hash table?

What is the search time complexity of an element in a hash table?

Hashing can be used in almost all lookup situations and, in practice, performs extremely well compared with data structures such as arrays, linked lists, and balanced BSTs. With hashing, search takes O(1) time on average (under reasonable assumptions) and O(n) in the worst case.

What are the average and worst-case time complexities of hash map lookup?
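A minimal separate-chaining hash map shows where these costs come from. It is a sketch under stated assumptions, not any particular library's implementation: the class name `ChainedHashMap`, the default of 16 buckets, and the use of Python's built-in `hash()` to pick a bucket are illustrative choices. Search, insert, and delete each hash the key to one bucket and scan only that bucket, so each is O(1) on average when keys are spread evenly and O(n) when every key lands in the same bucket.

```python
from collections.abc import Hashable
from typing import Optional


class ChainedHashMap:
    """Minimal hash map using separate chaining (illustrative sketch)."""

    def __init__(self, num_buckets: int = 16) -> None:
        # Each bucket is a list ("chain") of (key, value) pairs.
        self.buckets: list[list[tuple[Hashable, object]]] = [
            [] for _ in range(num_buckets)
        ]

    def _bucket(self, key: Hashable) -> list[tuple[Hashable, object]]:
        # The hash code selects exactly one bucket; no other bucket is scanned.
        return self.buckets[hash(key) % len(self.buckets)]

    def put(self, key: Hashable, value: object) -> None:
        bucket = self._bucket(key)
        for i, (k, _) in enumerate(bucket):
            if k == key:  # key already present: replace its value
                bucket[i] = (key, value)
                return
        bucket.append((key, value))

    def get(self, key: Hashable) -> Optional[object]:
        for k, v in self._bucket(key):
            if k == key:
                return v
        return None  # key not present

    def delete(self, key: Hashable) -> bool:
        bucket = self._bucket(key)
        for i, (k, _) in enumerate(bucket):
            if k == key:
                del bucket[i]
                return True
        return False


prices = ChainedHashMap()
prices.put("apple", 3)
prices.put("pear", 5)
prices.put("apple", 4)         # overwrites the earlier value
print(prices.get("apple"))     # 4
print(prices.delete("pear"))   # True
print(prices.get("pear"))      # None
```

Real hash maps also grow their bucket array as entries are added, which this sketch omits; with a fixed number of buckets, the chains get longer as more keys are stored.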

A hash map's best-case and average-case time for search, insert, and delete is O(1), and its worst case is O(n). The hash code is used to distribute objects systematically across buckets, so that a search only has to examine a small part of the table.

What is the average number of comparisons for a successful search in a hash table?
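Textbook analysis of separate chaining under simple uniform hashing, with load factor α = n/m for n keys in m buckets, gives about 1 + α/2 key comparisons for a successful search and α for an unsuccessful one. The simulation below is a sketch for checking those figures, not a proof: assigning each key a random bucket with Python's `random` module models an ideal hash function, and the function name `average_comparisons` and the table sizes are illustrative assumptions.

```python
import random


def average_comparisons(n: int, m: int, seed: int = 0) -> tuple[float, float]:
    """Simulate separate chaining with n keys in m buckets.

    Each key goes to a uniformly random bucket, standing in for an ideal
    hash function (simple uniform hashing). Returns the average number of
    key comparisons for (successful, unsuccessful) searches.
    """
    rng = random.Random(seed)
    buckets: list[list[int]] = [[] for _ in range(m)]
    for key in range(n):
        buckets[rng.randrange(m)].append(key)

    # Successful search: the key at position i (0-based) of its chain
    # is found after comparing against i + 1 keys.
    total = sum(i + 1 for chain in buckets for i in range(len(chain)))
    successful = total / n

    # Unsuccessful search: an absent key is equally likely to hash to any
    # bucket and must be compared against every key in that chain.
    unsuccessful = sum(len(chain) for chain in buckets) / m
    return successful, unsuccessful


n, m = 20_000, 10_000
alpha = n / m  # load factor
successful, unsuccessful = average_comparisons(n, m)
print(f"successful:   {successful:.3f}  (1 + alpha/2 = {1 + alpha / 2})")
print(f"unsuccessful: {unsuccessful:.3f}  (alpha = {alpha})")
```

In the worst case, when every key lands in the same chain, a search can compare against all n keys, which is where the O(n) bound comes from.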

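For separate chaining under simple uniform hashing, with load factor α = n/m (n keys stored in m buckets), a successful search makes about 1 + α/2 key comparisons on average, and an unsuccessful search makes α comparisons on average. In the worst case, when all keys land in the same bucket, a search can make up to n comparisons.

How does a hash table allow O(1) searching?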

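A hash table stores its elements as key-value pairs with unique keys. A hash function maps each key directly to a bucket index, so a lookup computes that index and examines only the few entries stored in that bucket instead of scanning the whole table. As long as the hash function spreads keys evenly and the table is not overloaded, this takes O(1) time on average.

What is the runtime of looking up a value in a hash map when you don’t know the key?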
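In Python terms, this is the difference between indexing a `dict` by key and scanning its items for a value. The helper `keys_for_value` below is a hypothetical name used for illustration; a dict is indexed by its keys, so a search by value has no bucket to jump to and must examine every entry.

```python
def keys_for_value(table: dict, target) -> list:
    """Return every key mapped to `target` by scanning all entries (O(n))."""
    return [key for key, value in table.items() if value == target]


ages = {"ann": 31, "bob": 27, "cy": 31}
print(ages["bob"])               # lookup by key, O(1) on average: 27
print(keys_for_value(ages, 31))  # lookup by value, O(n): ['ann', 'cy']
```

If reverse lookups are frequent, a common alternative is to maintain a second dict mapping values to keys, trading extra memory for average O(1) lookups in both directions.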

While you can look up the value for a given key in O(1) time on average, looking up the keys for a given value requires looping through the whole dataset, which takes O(n) time. Hash tables are also not always cache-friendly: many implementations use linked lists for their buckets, and linked-list nodes are not stored next to each other in memory.

What is the time complexity of looking up a key and a value, respectively, in a HashMap in Java?
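The effect of a bad hash function can be reproduced outside Java. This sketch uses Python's `dict`, which resolves collisions with open addressing rather than the per-bucket chains that Java's `HashMap` uses, so it shows the general effect rather than `HashMap`'s exact behavior. The `Key` class and its `bad_hash` switch are hypothetical, and the number of `__eq__` calls made during lookups stands in for lookup cost: with a constant hash every key collides, so each lookup compares against many other keys instead of almost none.

```python
class Key:
    """Hypothetical key type whose hash quality can be switched."""

    eq_calls = 0  # total __eq__ calls, used as a proxy for lookup cost

    def __init__(self, value: int, bad_hash: bool) -> None:
        self.value = value
        self.bad_hash = bad_hash

    def __hash__(self) -> int:
        # A bad hash returns the same code for every key, so all keys collide.
        return 0 if self.bad_hash else hash(self.value)

    def __eq__(self, other: object) -> bool:
        Key.eq_calls += 1
        return isinstance(other, Key) and self.value == other.value


def lookup_comparisons(n: int, bad_hash: bool) -> int:
    """Insert n keys into a dict, then count __eq__ calls while looking each up."""
    keys = [Key(i, bad_hash) for i in range(n)]
    table = {key: key.value for key in keys}
    Key.eq_calls = 0  # ignore comparisons made while inserting
    for key in keys:
        assert table[key] == key.value
    return Key.eq_calls


for n in (250, 500):
    good = lookup_comparisons(n, bad_hash=False)
    bad = lookup_comparisons(n, bad_hash=True)
    print(f"n={n}: good hash {good} comparisons, bad hash {bad} comparisons")
```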

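Looking up a key is generally O(1), because hashCode() leads directly to the key’s bucket. With a bad hashCode() function, however, many elements end up in the same bucket, so a key lookup can degrade to O(n) in the worst case. Looking up a value (for example with containsValue()) has no hash to guide it and must scan every entry, so it is O(n).

Why does searching a lookup table take O(1) time?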
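A lookup table of this kind can be sketched as a list indexed directly by the key. Here the keys are byte values 0 to 255, so each one can serve as its own index into a 256-entry list; the function name `byte_counts` is illustrative. Each update is a single indexed access, with no searching involved.

```python
def byte_counts(text: bytes) -> list[int]:
    """Count byte values using a direct-address lookup table."""
    counts = [0] * 256
    for b in text:  # iterating over bytes yields ints in range(256)
        counts[b] += 1  # the byte value is the index: one O(1) access
    return counts


counts = byte_counts(b"hello")
print(counts[ord("l")])  # 2
```

Hash tables generalize this idea: when keys are too large or too sparse to use directly as indices, a hash function maps them into a small range of indices instead.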

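Looking up an array item by index is O(1): the time is independent of both the size of the array and the position of the item. You supply the index number and the item is retrieved directly, because its memory location can be computed from the index, so the lookup takes the same time no matter how much data the array holds.

What is O(1) time?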
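Counting steps rather than measuring time makes the definition concrete. In this sketch (the function name `linear_search_steps` is illustrative), indexing a Python list is a single O(1) operation whatever its length, while a linear search for a missing value examines every element, so doubling the list doubles the steps.

```python
def linear_search_steps(data: list[int], target: int) -> int:
    """Count how many elements a linear search examines (O(n))."""
    steps = 0
    for value in data:
        steps += 1
        if value == target:
            break
    return steps


for n in (1_000, 2_000):
    data = list(range(n))
    _ = data[n // 2]  # O(1): one indexed access, whatever n is
    # -1 is never present, so the search examines every element.
    print(n, linear_search_steps(data, -1))
# prints:
# 1000 1000
# 2000 2000
```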

It means the running time of an algorithm is constant. … In short, O(1) means that an operation takes a constant amount of time, whether 14 nanoseconds or three minutes, no matter how much data is in the set. O(n) means it takes an amount of time linear in the size of the set, so a set twice the size will take roughly twice the time.

How does hashing work, and why is its complexity always O(1)?
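The effect of using fewer hash bits can be counted directly. In this sketch, a hypothetical hash function keeps only the low `bits` bits of an integer key, and the keys 0 to 1023 stand for every possible 10-bit key. Evaluating this hash takes the same time for any `bits`, but fewer bits mean fewer buckets (2**bits), so more keys pile into the largest bucket.

```python
from collections import Counter


def largest_bucket(keys: range, bits: int) -> int:
    """Size of the biggest bucket when the hash keeps only the low `bits` bits."""
    mask = (1 << bits) - 1
    buckets = Counter(key & mask for key in keys)  # bucket index -> key count
    return max(buckets.values())


keys = range(1024)  # every 10-bit key
for bits in (1, 4, 10):
    print(bits, largest_bucket(keys, bits))
# prints:
# 1 512
# 4 64
# 10 1
```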

Hashing is O(1) only if there is a constant number of keys in the table and some other assumptions are made. In such cases, it does have an advantage. If your key has an n-bit representation, your hash function can use 1, 2, … n of these bits. Consider a hash function that uses only 1 bit: it is certainly O(1) to evaluate, but it splits the key space into just two bins, so as many as 2^(n-1) keys can map to the same bin.

Why are hashes fast?
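A quick timing sketch shows the practical gap between the two kinds of search. It compares membership tests on a Python `list`, which checks elements one by one, and a `set`, which is hash-based; the sizes and repeat counts are arbitrary assumptions, and absolute timings will vary by machine.

```python
import timeit

n = 100_000
as_list = list(range(n))
as_set = set(as_list)
missing = -1  # an absent value forces the list search to scan every element

list_time = timeit.timeit(lambda: missing in as_list, number=100)
set_time = timeit.timeit(lambda: missing in as_set, number=100)
print(f"list: {list_time:.4f}s  set: {set_time:.6f}s")
```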

A primary advantage of hash tables is their average-case constant time complexity of O(1), which means they scale very well when used in algorithms. Searching an unsorted data structure such as an array has a linear time complexity of O(n). … Simply put, looking up an item in a hash table is usually faster than searching through an array.

Why do hash tables have constant lookup time?

Hash tables seem to be O(1) because they have a small constant factor, combined with the fact that the ‘n’ in their O(log(n)) cost is increased to the point that, for many practical applications, it is independent of the number of items actually in use.

Is set lookup constant time?
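The idea of a set as a hash table holding only keys can be sketched by wrapping a `dict` whose values are all `None`. The `KeySet` class is hypothetical; CPython's built-in `set` has its own hash-table implementation rather than wrapping a `dict`, so this illustrates the concept, not how Python actually implements sets.

```python
class KeySet:
    """Hypothetical set type: a hash table that stores keys but no values."""

    def __init__(self, items=()) -> None:
        self._table = dict.fromkeys(items)  # every value is None

    def add(self, item) -> None:
        self._table[item] = None

    def __contains__(self, item) -> bool:
        return item in self._table  # hash lookup, O(1) on average

    def __len__(self) -> int:
        return len(self._table)


s = KeySet(["a", "b", "a"])  # the duplicate "a" is stored once
print(len(s), "a" in s, "z" in s)  # 2 True False
```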

Sets are basically hash tables that store only keys and not values. … Because a set is a hash table, lookup takes constant time in the average case.

Are hash tables slow?

Hash functions can be slow, and there is something to watch out for: don’t use the traditional modulo-based hash function you’ll find in your algorithms textbook. For example, Sedgewick’s version performs a “% M” modulo operation once per character in the input string.
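The difference can be seen by counting modulo operations. This sketch assumes the common textbook form h = (a·h + c) mod M for the per-character version; the multiplier a = 127, the modulus, the function names, and the 32-bit mask in the second version are illustrative assumptions rather than details taken from the text. The second version keeps the running hash in 32 bits with a cheap bit mask (mimicking unsigned overflow in C) and reduces modulo M only once at the end. The two functions generally return different hash values, but both map keys into range(M).

```python
def hash_mod_per_char(s: str, m: int, a: int = 127) -> tuple[int, int]:
    """Textbook-style string hash: reduce modulo m after every character.

    Returns (hash value, number of modulo operations performed).
    """
    h, mods = 0, 0
    for ch in s:
        h = (a * h + ord(ch)) % m
        mods += 1
    return h, mods


def hash_mod_once(s: str, m: int, a: int = 127) -> tuple[int, int]:
    """Same recurrence, wrapped to 32 bits with a mask and reduced modulo m once."""
    h = 0
    for ch in s:
        h = (a * h + ord(ch)) & 0xFFFFFFFF  # bit mask instead of modulo
    return h % m, 1


key = "separate chaining"
m = 97
print(hash_mod_per_char(key, m))  # second item is 17, one modulo per character
print(hash_mod_once(key, m))      # second item is 1
```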